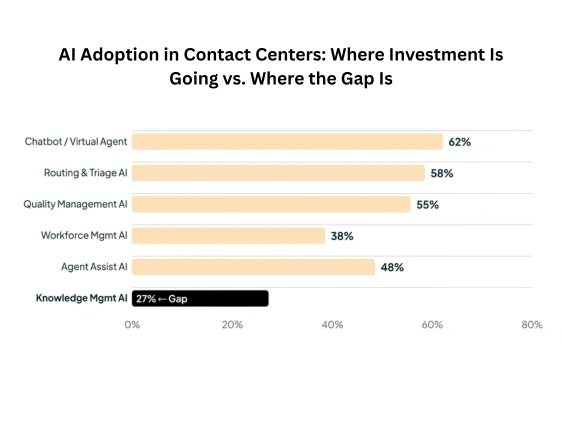

27% of contact centers are currently using AI within knowledge management. Most contact center leaders reading that number will do one of two things: feel quietly vindicated that they’re in the 27%, or feel a sting of recognition that they’re not. Either way, the more pressing question isn’t where you are today — it’s what that gap is actually costing you every quarter.

The math is uncomfortable. If 91% of customer service leaders are under pressure to implement AI in 2026, yet only 27% have extended that AI investment to their knowledge management layer, a structural mismatch is playing out inside thousands of contact centers right now. And it’s the kind of mismatch that compounds.


Why the Knowledge Layer Gets Left Behind

Spend five minutes inside a contact center technology budget conversation and you’ll see the pattern. AI spending goes to the visible, customer-facing layer first: chatbots, conversational IVR, agent assist tools, and quality management. Knowledge management is treated as plumbing, critical but unglamorous, upgraded only when things break.

The problem is that all those front-end AI investments draw from the same well: the knowledge base. A chatbot that can’t find the right answer is just an expensive source of customer frustration. An agent assist tool surfacing outdated policy documents helps no one. AI in customer service statistics for 2026 consistently show that model quality accounts for only a fraction of output quality; the knowledge feeding that model is the real determinant.

Yet organizations continue treating KM as a documentation project rather than a strategic asset. The result is a growing gap between what AI tools promise in the demo room and what they deliver in production.

See how a leading telecom reduced call volume by 40% with Knowmax’s AI-powered knowledge management platform.

The Real Cost of Ignoring KM in Your AI Stack

Let’s put numbers to the problem. Consider what happens at scale when your knowledge management layer isn’t AI-equipped:

- Agents spend 15–20% of each shift searching for information, time that is entirely recoverable with intelligent knowledge retrieval.

- Handle time stays inflated. McKinsey’s research on generative AI productivity found AI-enabled agents achieved a 14% increase in issue resolution per hour and a 9% reduction in handle time, gains that depend entirely on the quality and accessibility of underlying knowledge.

- Self-service fails at the last mile. 61% of customers prefer resolving simple issues via self-service, but that preference collapses when the knowledge base is incomplete, stale, or poorly structured.

- New-agent ramp-up takes longer than it should. When knowledge is scattered across PDFs, wikis, and institutional memory, onboarding new agents becomes a months-long project rather than a week-long one.
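To make the first figure concrete, here is a minimal sketch of the arithmetic behind a quarterly search-time cost. Only the 15–20% search share comes from the list above. The headcount, shift length, shifts per quarter, and hourly cost are hypothetical placeholders, and the `ContactCenter` class and `quarterly_search_cost` function are illustrative names, not part of any product.

```python
from dataclasses import dataclass


@dataclass
class ContactCenter:
    """Hypothetical inputs; none of these values come from the article."""
    agents: int               # number of agents on the floor
    shift_hours: float        # paid hours per shift
    shifts_per_quarter: int   # shifts worked per agent per quarter
    hourly_cost: float        # fully loaded cost per agent hour


def quarterly_search_cost(center: ContactCenter, search_share: float) -> float:
    """Cost of the agent time spent searching for information in one quarter."""
    if not 0.0 <= search_share <= 1.0:
        raise ValueError("search_share must be between 0 and 1")
    paid_hours = center.agents * center.shift_hours * center.shifts_per_quarter
    return paid_hours * search_share * center.hourly_cost


# Illustrative inputs only; replace them with your own figures.
center = ContactCenter(agents=200, shift_hours=8.0, shifts_per_quarter=60, hourly_cost=25.0)

low = quarterly_search_cost(center, 0.15)   # lower bound of the 15-20% range
high = quarterly_search_cost(center, 0.20)  # upper bound of the 15-20% range
print(f"Estimated quarterly search cost: {low:,.0f} to {high:,.0f}")
```

With these placeholder inputs the estimate lands at roughly 360,000 to 480,000 per quarter, in whatever currency `hourly_cost` uses. The sketch counts only search time; it does not model handle time, self-service deflection, or onboarding effects.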

FAQs

Agents waste 15–20% of each shift searching for answers. Handle times stay high, self-service fails, and the AI tools you’ve already invested in underperform, because the knowledge feeding them is outdated or disorganized.

It surfaces the right answer during a live call, not after. It flags outdated content automatically, closes knowledge gaps in real time, and cuts new agent ramp-up time significantly. The result: faster resolutions and lower handle time.

Prioritize: semantic search, real-time agent assist, content health monitoring, and a single knowledge base that powers both agents and self-service. Accuracy of retrieval matters more than any other feature.

Most teams see handle time drop within the first month. Self-service and onboarding improvements show up by month 3. Full ROI typically materializes within 3–6 months — faster if your knowledge base is already in decent shape.
